In March 2021, all projects in the metrics theme prepared a summary of their activities. This summary complements the newsletter sent out to all our partners.

ACoRA: Automated Code Review Assistant

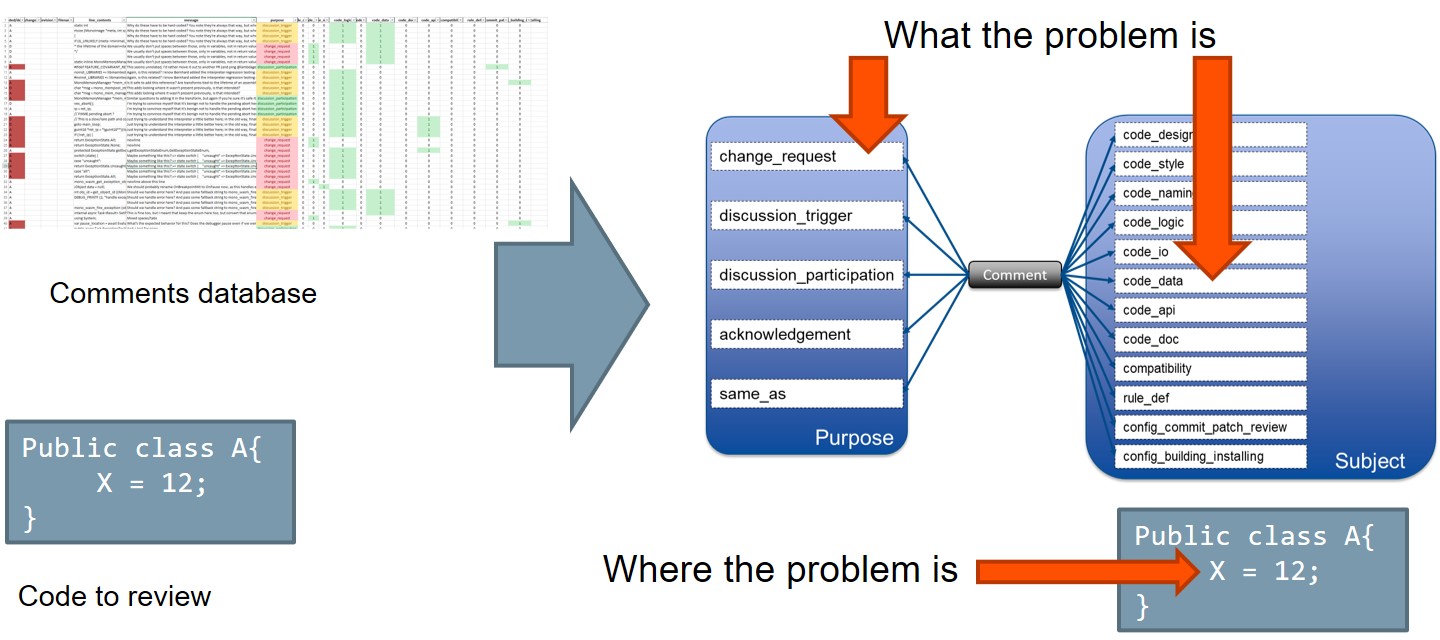

Modern code reviews have become an integral part of the software delivery and deployment pipeline. However, as the speed of software development increases, manual code reviews can become a bottleneck: as the size of committed code increases, the effort for manual reviews grows exponentially. ACoRA (Automated Code Review Assistant) is a tool that identifies code fragments that need to be manually reviewed, shows potential problems that these code fragments can cause, and provides examples of similar problems. ACoRA is trained on the company's source code and on its previous code reviews from, e.g., Gerrit and GitHub.

ACoRA provides value for the company in terms of:

- Increased speed of modern code reviews

- Automation of company-specific coding guidelines

- Classification of review comments

- Knowledge transfer between the company’s organizations (learning by example)

link to recording from lunch seminar

Modeling Data Anomalies

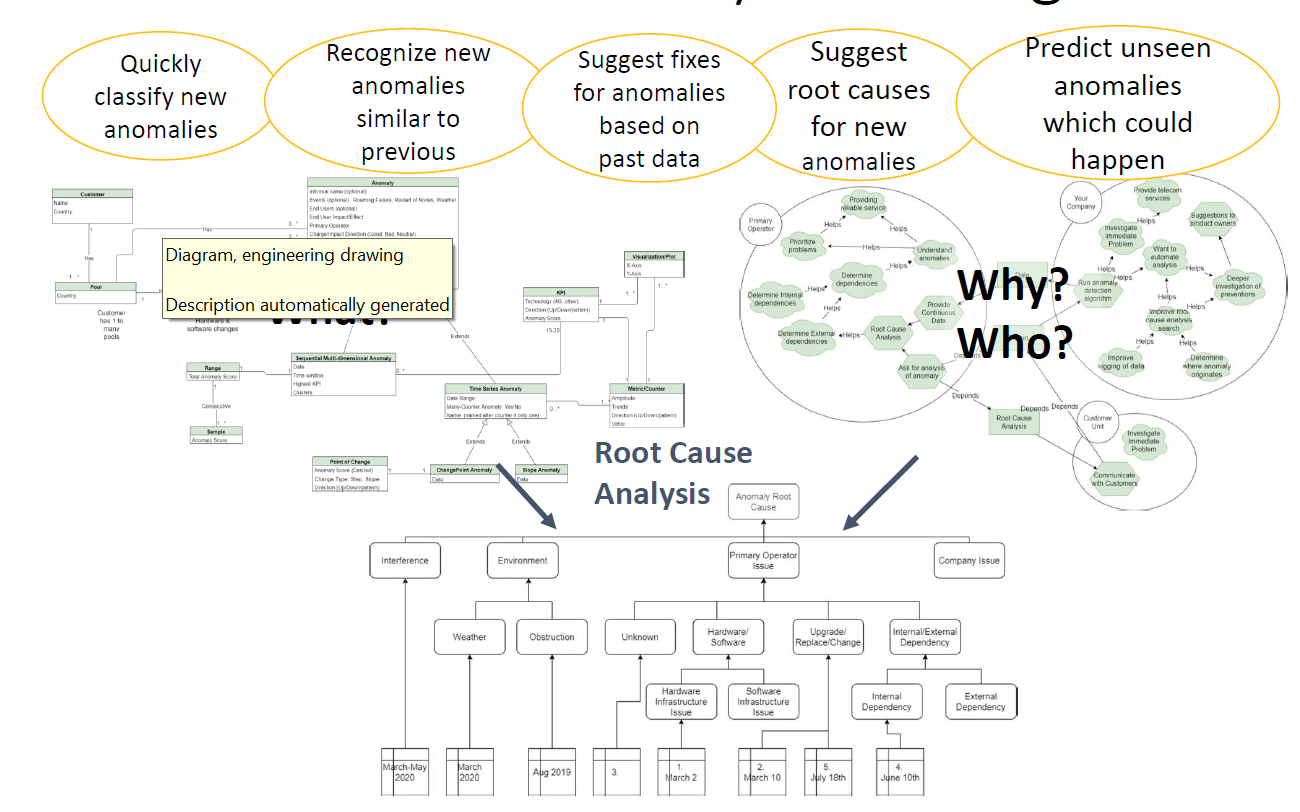

As we collect large amounts of industrial data, anomaly analysis allows us to detect, analyze, and understand anomalous or unusual data patterns. This is an important activity for understanding, for example, deviations in service, which may indicate potential problems, or differing customer behavior, which may reveal new business opportunities. Existing work on anomaly detection often fails to detect the root causes of data anomalies or to support understanding them. In this project, we have worked with two teams at Ericsson to model and understand the attributes and root causes of data anomalies. After several iterations, we have created general and anomaly-specific UML class diagrams and goal models to capture anomaly details. We use our experiences to create an example taxonomy, classifying anomalies by their root causes, and to create a general method for modeling and understanding data anomalies.

Benefits:

- better understanding of anomalies and their root causes

- easier classification into patterns of anomalies

- can lead to faster recommendations for fixes or responses

- working towards creating a training set which may be used for machine learning approaches

Establishing a metrics language

Two years ago, a dedicated metrics team was formed at Axis to support the R&D organization. Since then, the team has grown continuously and today comprises 20 members. In this short period, the team had to build up an advanced infrastructure to enable the efficient delivery of measurement systems. Focus was also put on becoming a high-performance team, to address the ever-increasing demands from its organization. In parallel, the team had to help elevate the metrics competence of its organization.

Putting in place an advanced measurement program under these conditions is difficult and poses several challenges. A key success factor is communication, i.e., the establishment of a metrics-specific language. Among other things, a metrics language enables the organization to communicate its measurement needs to the metrics team, and the metrics team to translate these needs into information products (e.g., Kibana/Grafana and Qlik dashboards).

The metrics team, with the help of experts in both communication and metrics, set out to establish a metrics language last sprint. Several workshops were held, both internally at Axis and with participants from other Software Center companies. The goal then was to establish a metrics language between stakeholders and the metrics team. During this sprint, the work continues, with the goal of extending it to the whole of Axis.

Metrics team maturity model

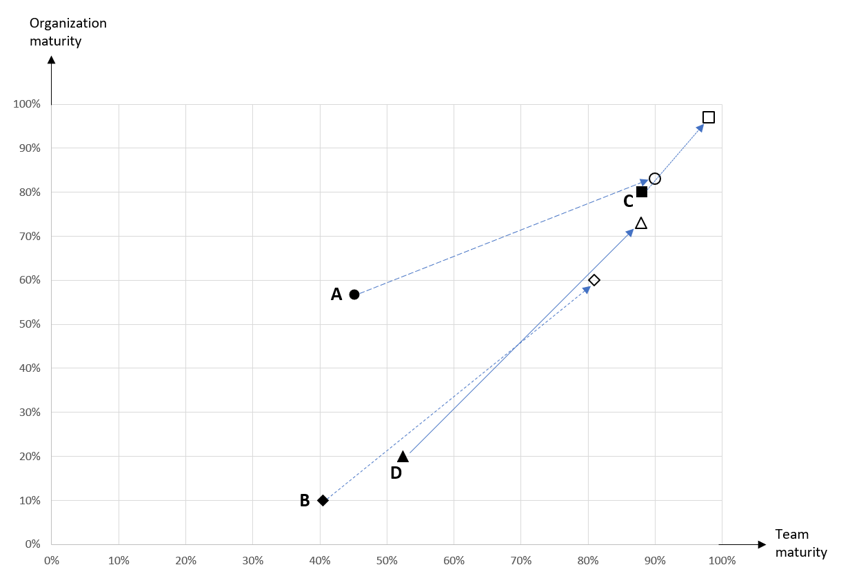

Measurements, KPIs, predictions, early warnings, big data, data analytics, machine learning, and AI are on everyone's lips today. Modern, high-performing software development organizations work intensively to address these topics by establishing dedicated, professional metrics teams.

These teams comprise different roles, e.g., measurement designers, database administrators, and data analysts. They work closely with their organizations, addressing their information needs. Put simply, the outcome of the metrics teams' work is the delivery of information products (e.g., MS Excel files and dashboards) and the elevation of the metrics competence of their organizations.

Given the increasing and crucial role of metrics teams in the successful operation of today’s organizations, we developed a tool to assess the performance of metrics teams. The tool takes the form of a checklist. It covers all aspects of a successful, high-performing metrics team, as well as the metrics maturity of its organization.

The project provides the following value to the companies:

- Understanding the roles and responsibilities of the metrics teams and their organizations

- Increasing the organizations’ ability to define and use information products delivered by the metrics teams

- Measurement-based improvement of organizational and product performance

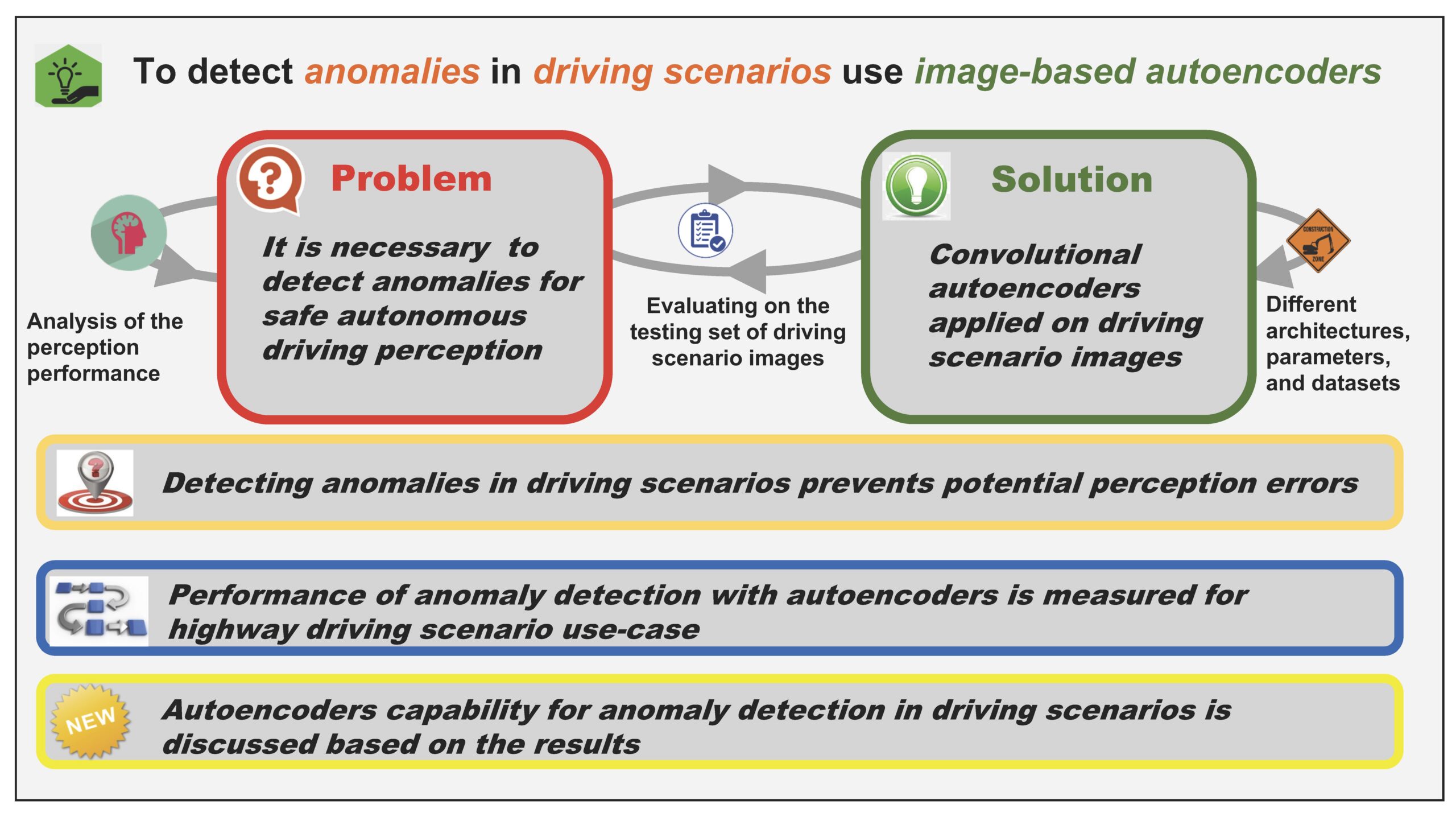

Anomaly Detection in Driving Scenarios with Autoencoders

Image-based autoencoders can be used for anomaly detection in driving scenarios, which can help prevent potential perception errors in autonomous driving. Autoencoders do not require labeled data and can be used independently of the actual perception algorithm. In our project, we train and evaluate convolutional autoencoders on images of simulated highway driving scenarios. Measuring anomaly detection performance with different architectures, training parameters, approaches, and datasets allows us to estimate the capability of autoencoders for anomaly detection in driving scenarios. Automotive engineers can take this project’s results into account when developing next-generation autonomous driving perception systems.
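
The summary does not say how an image is scored as anomalous. A common approach with autoencoders, assumed here for illustration only, is to compare each image with its reconstruction and flag it when the reconstruction error exceeds a threshold calibrated on normal images. The sketch below shows only that decision step; the trained autoencoder is taken as given, and the function names, the flat pixel lists, and the 0.95 quantile are all hypothetical choices, not details from the project.

```python
from statistics import mean


def reconstruction_error(original, reconstructed):
    """Mean squared error between two equally sized flat lists of pixel values."""
    if len(original) != len(reconstructed):
        raise ValueError("image and reconstruction must have the same size")
    return mean((o - r) ** 2 for o, r in zip(original, reconstructed))


def fit_threshold(normal_errors, quantile=0.95):
    """Pick a threshold from errors measured on normal (non-anomalous) images.

    Returns the error value at the given quantile position of the sorted list
    (assumption: 0.95 is an arbitrary illustrative choice).
    """
    ordered = sorted(normal_errors)
    index = min(len(ordered) - 1, int(quantile * len(ordered)))
    return ordered[index]


def is_anomalous(original, reconstructed, threshold):
    """Flag an image whose reconstruction error exceeds the threshold."""
    return reconstruction_error(original, reconstructed) > threshold


# Illustrative values only: errors an autoencoder might produce on normal images.
normal_errors = [0.010, 0.012, 0.009, 0.011, 0.013]
threshold = fit_threshold(normal_errors)

image = [0.2, 0.4, 0.6, 0.8]
close_reconstruction = [0.21, 0.39, 0.61, 0.79]  # well reconstructed
poor_reconstruction = [0.8, 0.1, 0.1, 0.2]       # poorly reconstructed

print(is_anomalous(image, close_reconstruction, threshold))
print(is_anomalous(image, poor_reconstruction, threshold))
```

Because the threshold is derived from unlabeled normal images alone, this decision step needs no anomaly labels, in line with the point above that autoencoders do not require labeled data.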

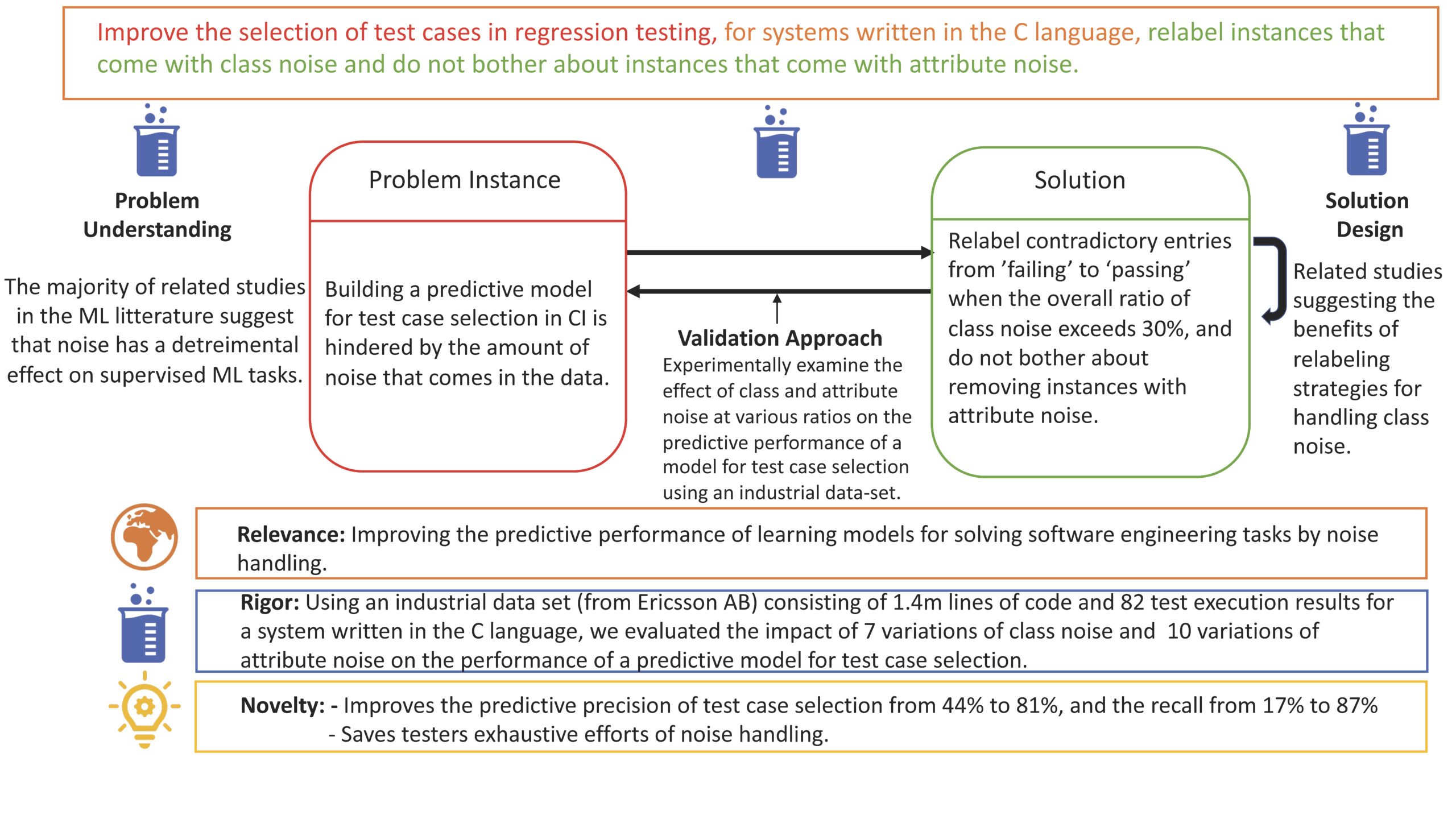

Noise detection in ML

link to recording from the lunch seminar

Communication

Software development requires close collaboration between teams and stakeholders. Within this context, communication is an important tool for building sustainable relationships. Lack of communication can lead to delivery delays and can erode trust between teams and stakeholders.

On this basis, we set out to explore how to resolve the communication challenges that metrics teams experience when working with stakeholders. We had a unique opportunity to carry out the field research at Axis and Ericsson. The plan is to involve other Software Center companies in the coming sprints, to validate and extend the findings.

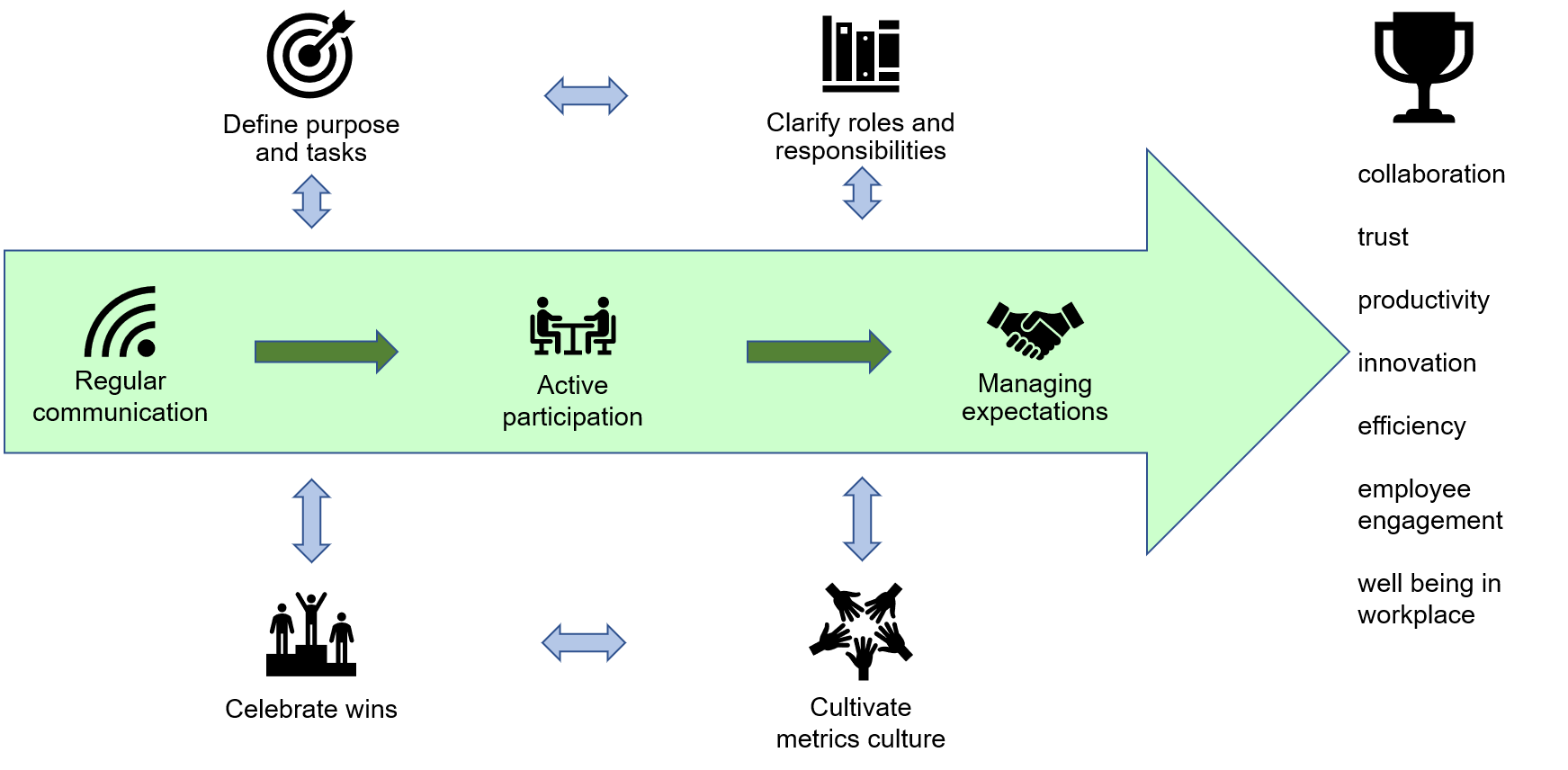

The outcomes so far are illustrated in the figure, which summarizes the identified communication challenges experienced in metrics delivery, including:

- Aligning expectations between metrics teams and stakeholders

- Prioritizing demands

- Regular feedback

- Continuous dialogue